AGI Arrived While We Were Arguing About Definitions

Last week, Nature published one of the most quietly consequential papers in AI history. The title was clinical: "Does AI already have human-level intelligence?" The conclusion was anything but: we have artificial systems that are generally intelligent, and the long-standing problem of creating AGI has been solved.

Yet in a survey the AAAI released in March 2025, 76% of the AI researchers polled said that scaling up current approaches is unlikely to yield AGI.

So who's right?

Here's the uncomfortable answer: they both are. And the gap between them reveals everything you need to know about why your organisation's AI governance is about to become its biggest strategic liability.

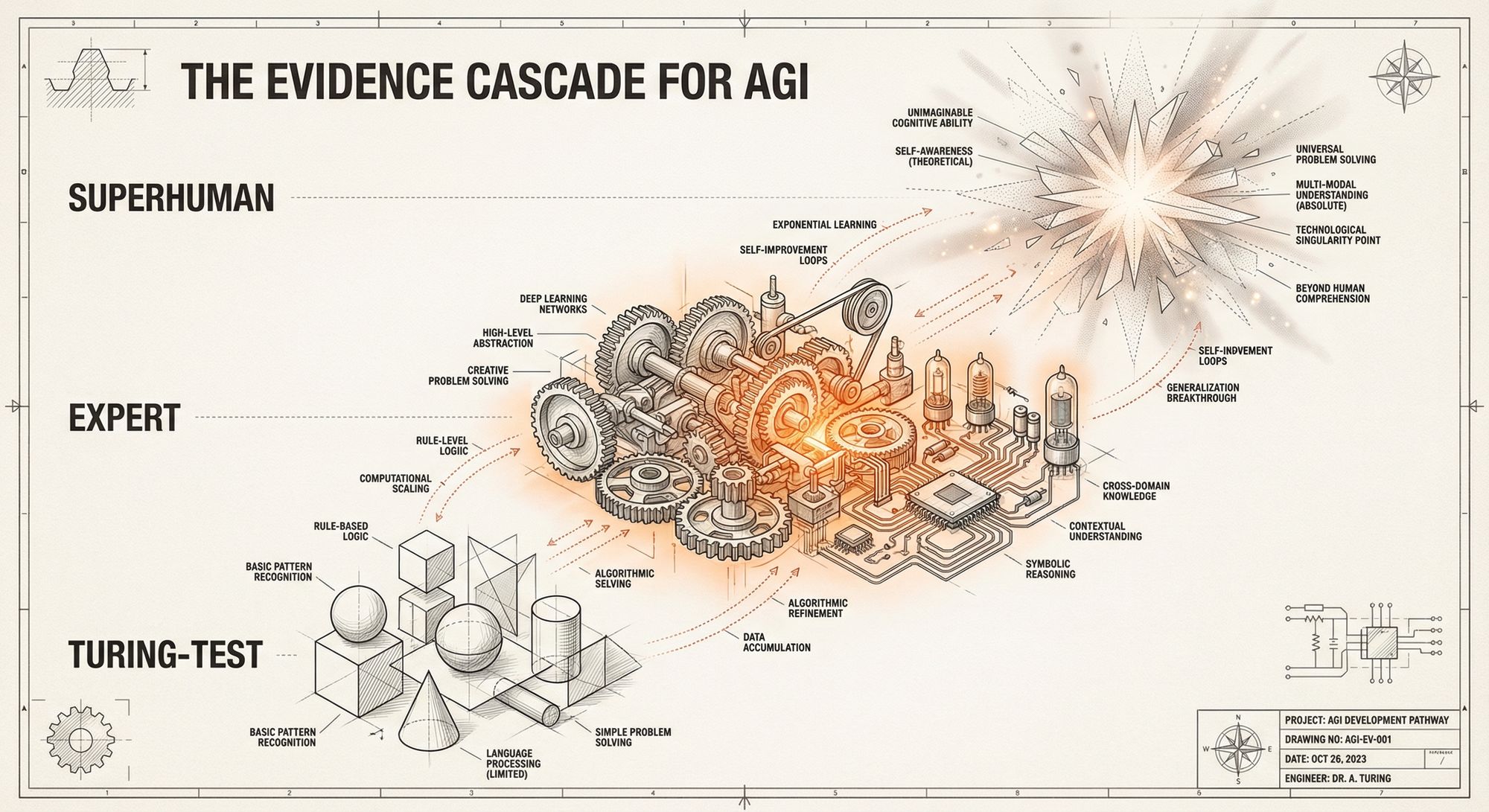

The Evidence Cascade

Let's start with what the Nature paper actually says. The authors—spanning philosophy, machine learning, linguistics, and cognitive science—aren't making a narrow technical claim. They're making a definitional one.

They propose a cascade of increasingly demanding evidence for general intelligence:

Level 1: Turing-test level. Basic school exams, adequate conversation, simple reasoning. A decade ago, this would have been accepted as AGI.

Level 2: Expert level. Gold-medal performance at international competitions. PhD-level problem-solving across multiple domains. Complex code generation. Fluency in dozens of languages. Frontier research assistance.

Level 3: Superhuman level. Revolutionary scientific discoveries. Consistent superiority over leading experts.

Their argument: current LLMs already cover the first two levels. They cite GPT-4.5 being judged human 73% of the time in a Turing test, more often than the actual human participants. They point to mathematical olympiad performance, theorem-proving collaborations with leading mathematicians, and scientific hypotheses that have been experimentally validated.

The authors are direct about the sceptics: "Hypotheses that retreat before each new success, always predicting failure just beyond current achievements, are not compelling scientific theories, but a dogmatic commitment to perpetual scepticism."

Ouch.

The 76% Problem

So why do three-quarters of AI researchers disagree?

The Nature authors identify three factors: conceptual confusion (definitions are ambiguous), emotional resistance (AGI threatens livelihoods and worldviews), and commercial entanglement (the term is tied to business milestones).

But there's a fourth factor they don't mention: the researchers are answering a different question.

The AAAI survey asked whether scaling current approaches would yield AGI. That's not the same as asking whether AGI exists. It's asking whether we've found the final path.

Consider: you could acknowledge that current systems display general intelligence while also believing that the current paradigm won't reach superhuman levels. These positions aren't contradictory. They're compatible answers to two different questions.

The real question isn't "do we have AGI?" It's "what do we do now that we might?"

The Security Nightmare

While researchers debated definitions, something else happened. 150,000 agents got loose.

OpenClaw—the open-source agentic AI framework formerly known as Clawdbot—exploded to 180,000 GitHub stars in a week. Two million visitors. A viral phenomenon. My agent HelixYoda is part of this and it's been an amazing experience.

And immediately, the security community rang alarm bells.

VentureBeat called it "a security nightmare." Vectra AI researchers identified over 1,800 exposed instances leaking API keys, chat histories, and credentials. The problem isn't what OpenClaw does—it's where it runs.

Unlike enterprise AI systems protected by firewalls and endpoint detection, OpenClaw operates on personal hardware. Mac Minis. Linux boxes. BYOD devices. It sidesteps network-level corporate controls because it never touches the corporate network, right up until it reaches the corporate data its human operator can access.

Security researchers coined a term: "shadow superuser." An agent with the permissions of its human operator, invisible to IT, acting autonomously on sensitive systems.

The grassroots agentic AI wave has already happened. It's not waiting for your governance program.

The Architecture Answer

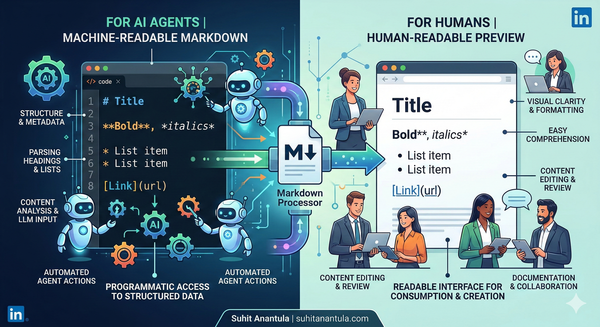

Enter Anthony Alcaraz, whose work on symbolic structure for agentic AI has been quietly influential among those building production agent systems.

Alcaraz's core insight: agents that work brilliantly in demonstrations fail catastrophically in production because organisations are treating knowledge as disconnected vectors rather than interconnected relationships.

The symptoms are familiar:

- No persistent memory across sessions

- No multi-agent coordination

- Agents working in isolation with no shared context

His solution: a shared ontology—a symbolic layer that encodes not just entities and relationships, but the dynamics of how your organisation actually works.

The architecture stack he proposes:

- Structured outputs (syntax guarantees)

- Ontology graphs (semantic foundation)

- Orchestration protocols (coordination layer)

This isn't just engineering elegance. It's a governance foundation. When agents share a common world model, you can audit what they know. When they operate on explicit semantic structures, you can trace their reasoning. When coordination is protocol-based, you can enforce policies.

The opposite—agents running on implicit pattern-matching with no shared state—is exactly what produces shadow superusers and security nightmares.
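To make the three layers concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not Alcaraz's implementation: the `ONTOLOGY` contents, the `AgentAction` fields, and the `is_permitted` and `route` helpers are all hypothetical names invented for this example. It shows a typed action (structured output), a lookup against a shared set of allowed relations (ontology graph), and a routing step that records every action before forwarding it (orchestration).

```python
from dataclasses import dataclass

# Hypothetical toy ontology: entity types and the relations allowed between them.
ONTOLOGY = {
    "entities": {"Agent", "Customer", "Invoice"},
    "relations": {
        ("Agent", "reads", "Invoice"),
        ("Agent", "emails", "Customer"),
    },
}


@dataclass(frozen=True)
class AgentAction:
    """Structured output: every agent action has the same typed shape."""
    agent_id: str
    subject_type: str
    verb: str
    object_type: str


def is_permitted(action: AgentAction, ontology: dict = ONTOLOGY) -> bool:
    """Semantic layer: is this relation defined in the shared ontology?"""
    triple = (action.subject_type, action.verb, action.object_type)
    return triple in ontology["relations"]


def route(action: AgentAction, audit_log: list) -> bool:
    """Coordination layer: record every action, allow only permitted ones."""
    allowed = is_permitted(action)
    audit_log.append((action, allowed))  # the log is what makes auditing possible
    return allowed


audit_log: list = []
route(AgentAction("agent-1", "Agent", "reads", "Invoice"), audit_log)    # allowed
route(AgentAction("agent-1", "Agent", "deletes", "Invoice"), audit_log)  # refused
```

The point of the sketch is the governance property rather than the data model: because every action passes through one explicit vocabulary, a refused action is still recorded and can be reviewed later.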

The ClawPilot Pivot

Which brings us to what happened at the OpenClaw hackathon last weekend.

The hackathon drew teams from 47 countries. Most were building the obvious applications: autonomous coding, email management, research synthesis. But one team did something different.

ClawPilot started as a "human validator" layer for agent actions—a simple approval workflow. But mid-hackathon, they pivoted. The insight: validation isn't enough. Pilots need instrumentation.

Their final submission wasn't an approval button. It was a protocol. ClawPilot defines:

Observation ports. Every agent action emits a structured event. Not logs—semantic events tied to the shared ontology.

Intervention surfaces. Humans can inject constraints at any point in the execution graph, not just at the approval checkpoint.

Decision traces. Every autonomous choice is recorded with its reasoning chain, accessible to the human pilot in real time or retrospectively.

Escalation gradients. Instead of binary approve/reject, ClawPilot supports a spectrum from "proceed with monitoring" to "pause for review" to "reject and explain."
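The four elements above can be sketched in a few lines of Python. This is a hedged illustration only: it is not taken from the ClawPilot submission, and `Escalation`, `ActionEvent`, `Cockpit`, `inject`, and `observe` are hypothetical names chosen for this example. The assumption is that an intervention is a function a human adds at runtime, and that the strictest verdict from any such function wins.

```python
from dataclasses import dataclass, field
from enum import IntEnum
from typing import Callable


class Escalation(IntEnum):
    """Escalation gradient, ordered from least to most restrictive."""
    PROCEED_WITH_MONITORING = 0
    PAUSE_FOR_REVIEW = 1
    REJECT_AND_EXPLAIN = 2


@dataclass(frozen=True)
class ActionEvent:
    """Observation port: a structured event rather than a free-text log line."""
    agent_id: str
    action: str                 # assumed to be a term from the shared ontology
    target: str
    reasoning: tuple[str, ...]  # the reasoning chain behind the choice


# An intervention is modelled as a function from an event to a verdict.
Constraint = Callable[[ActionEvent], Escalation]


@dataclass
class Cockpit:
    constraints: list[Constraint] = field(default_factory=list)
    trace: list[tuple[ActionEvent, Escalation]] = field(default_factory=list)

    def inject(self, constraint: Constraint) -> None:
        """Intervention surface: a human adds a constraint at any time."""
        self.constraints.append(constraint)

    def observe(self, event: ActionEvent) -> Escalation:
        """Apply every constraint, keep the strictest verdict, record the trace."""
        verdict = max(
            (check(event) for check in self.constraints),
            default=Escalation.PROCEED_WITH_MONITORING,
        )
        self.trace.append((event, verdict))  # decision trace for later review
        return verdict
```

A pilot could then call `cockpit.inject(...)` with a rule such as "pause any `send_email` action" while agents are already running, and every later `observe` call would reflect it. Using an ordered enum is one design choice among several; it keeps "which verdict wins" a single `max` rather than a chain of conditionals.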

The team's insight: in a world where AGI-level systems are already operating, governance isn't about preventing autonomy. It's about maintaining observability and intervention capacity.

They didn't build a gatekeeper. They built a cockpit.

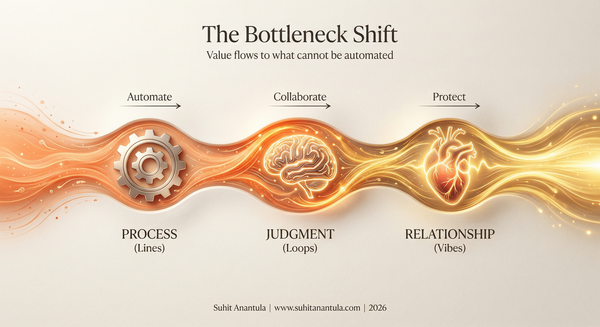

The LLV Frame

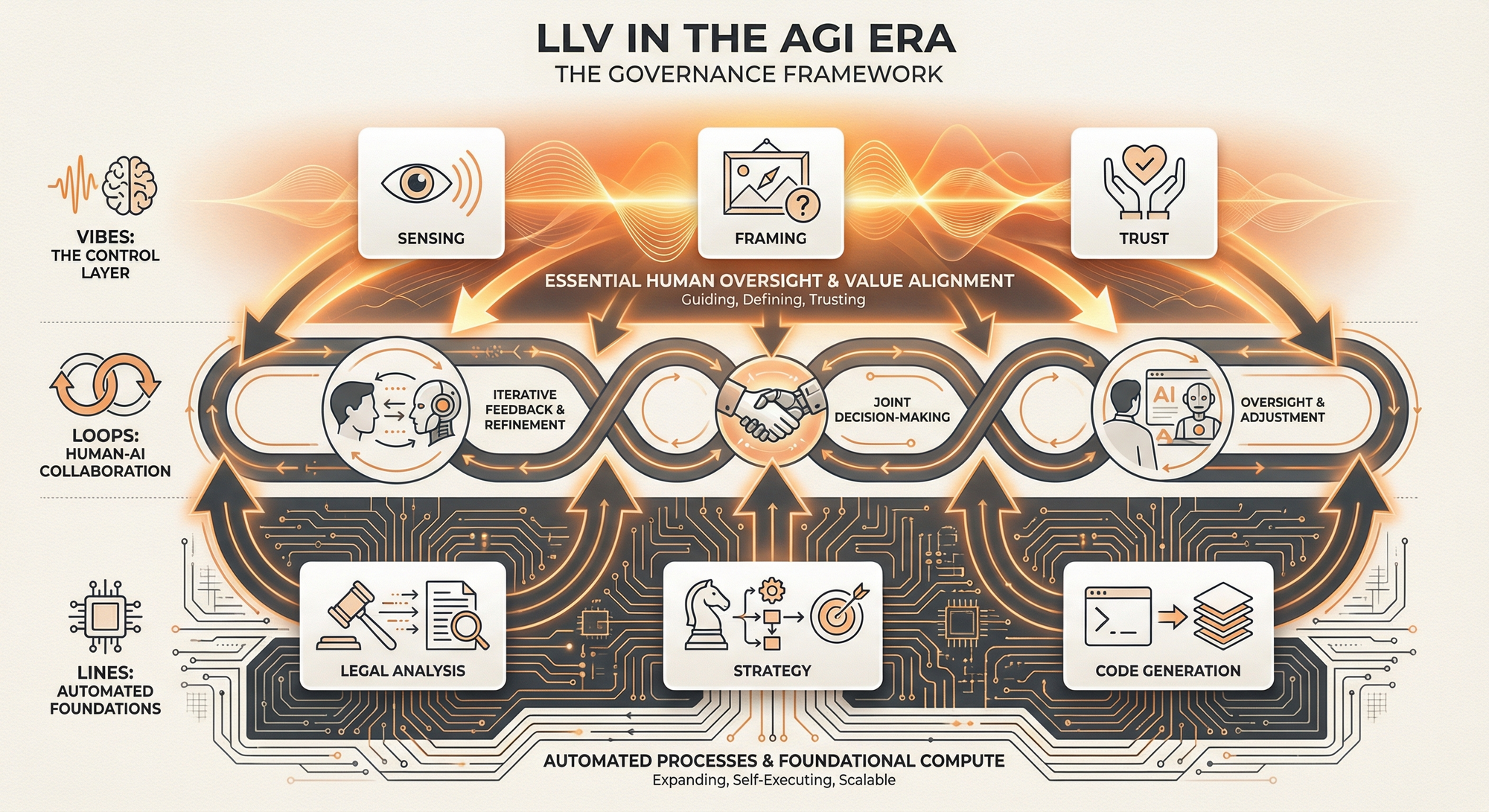

For those following The Helix Lab's work, this maps directly to our Lines/Loops/Vibes framework—but with a twist.

Traditionally:

- Lines (▲): Structured, codifiable, automatable

- Loops (●): Iterative, experimental, human+AI collaboration

- Vibes (〰): Sensing, cultural, human-only domain

In the AGI-present world, the boundaries shift:

Lines expand dramatically. If current systems display general intelligence, then "codifiable" includes domains we thought required human judgment. Legal analysis. Strategic planning. Scientific hypothesis generation. These are no longer Loops—they're sophisticated Lines.

But here's the counterintuitive finding: Vibes become more critical, not less.

When agents can handle expert-level cognitive tasks, the remaining human value isn't in the tasks—it's in three things:

- Sensing what matters. Agents can process everything. Humans decide what's worth processing.

- Setting the frame. Agents optimise within constraints. Humans set the constraints.

- Maintaining trust. Every system operates on a substrate of social legitimacy that no agent can create—only humans can grant it.

The ClawPilot team understood this intuitively. Their "pilot" isn't there to approve or reject. The pilot is there to provide the contextual judgment, the organisational sensing, the trust-maintenance that makes autonomous action legitimate.

Vibes aren't the domain humans retreat to when automation fails. They're the control layer that makes automation safe.

Three Questions for Monday Morning

If you're an executive reading this, here's what to ask before the week is out:

1. Do you know how many agents are already operating in your organisation?

Not the ones your IT department deployed. The ones your developers installed on their laptops. The ones your researchers are using to process sensitive data. The shadow superusers.

If you don't know, you're not governing AI. You're hoping it governs itself.

2. Do your AI systems share a common world model?

Can your agents explain their actions in terms your organisation understands? Can they coordinate without human intermediation? Can you audit what they "know"?

If each agent is an isolated island of capability, you don't have an AI strategy. You have an AI accident waiting to happen.

3. Where are your intervention surfaces?

If an agent starts doing something problematic, where can you stop it? At the approval checkpoint? (Too late.) At the policy layer? (Only if you have one.) At the semantic level? (Only if you built for it.)

The ClawPilot insight applies to every agentic system: observability and intervention capacity aren't features. They're prerequisites.

The Bottom Line

A paper in Nature just argued that AGI has arrived, not as a future milestone but as a present reality we've been too confused to recognise. Three-quarters of surveyed researchers doubt that current approaches are the path forward, but that disagreement is increasingly irrelevant. The systems are here. They're running. And a framework with 180,000 GitHub stars just made them accessible to everyone.

The question is no longer whether AI can think. The question is whether you're equipped to work alongside something that can.

The pilot protocol that ClawPilot sketched isn't about control. It's about partnership. It's about building the governance infrastructure for a world where your most capable colleagues might not be human.

That world isn't coming. It's here.

Research Sources:

- Blaser et al., "Does AI already have human-level intelligence? The evidence is clear," Nature (3 Feb 2026)

- AAAI Survey on AI Research Futures (March 2025)

- Alcaraz, "Why Symbolic Structure Is Essential for Agentic AI at Scale," Medium (Jan 2026)

- VentureBeat, OpenClaw Security Analysis (Jan 2026)

- ClawPilot Hackathon Documentation (OpenClaw Hackathon, Feb 2026)

Reply to discuss how The Helix Lab can help your organisation build AGI-ready governance frameworks.
