Why AI Won't Replace Everything (And Why That's Good News)

A Stanford economist just proved what we've been saying all along.

Not with opinions. With math.

Charles I. Jones, one of the world's leading growth economists, published a paper this month that should be required reading for every executive drowning in AI hype.

The title: "A.I. and Our Economic Future."

The finding: Even if we could automate every piece of knowledge work with infinite productivity, GDP would only rise by 50%.

Not 10x. Not infinite growth. Fifty percent.

And that's the ceiling.

This isn't pessimism. It's arithmetic. And it explains why the organisations that will win the AI era aren't the ones automating everything. They're the ones identifying what can't be automated and investing there.

Let me show you why.

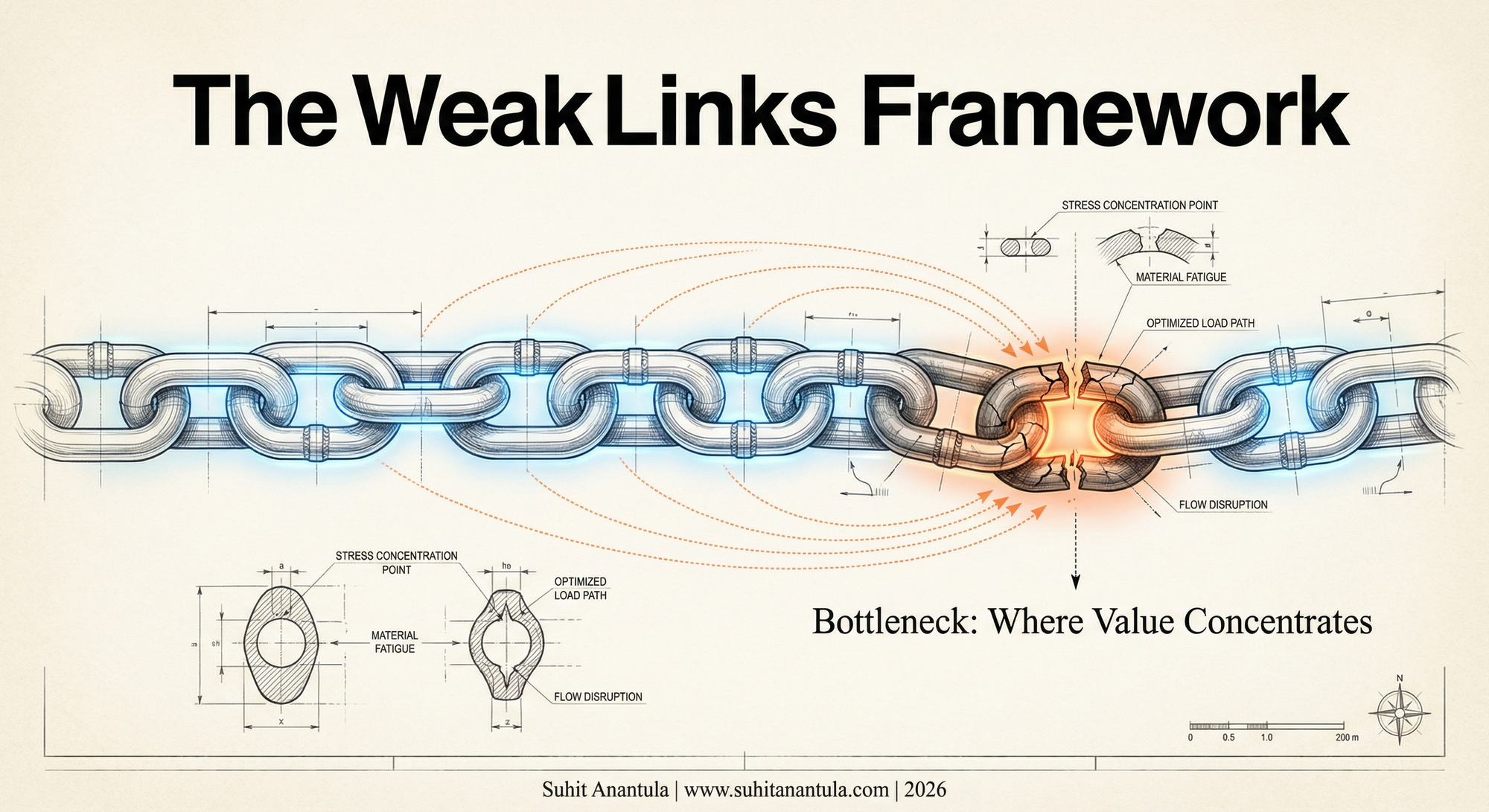

The Chain That Breaks at Its Weakest Point

Jones introduces what he calls the "weak links" framework. It comes from a branch of economics that treats production as a series of complementary tasks.

Here's the core insight:

When tasks are complements — when you need all of them to create output — your total production is limited by your worst bottleneck.

Think of it like a chain. You can make 99 links infinitely strong. The chain still breaks at link 100.
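To make the chain picture concrete, here is a minimal Python sketch. It assumes the extreme case of perfect complements, where output equals the weakest task. That is an illustrative simplification rather than the exact functional form in Jones's paper, and the name `chain_strength` is hypothetical.

```python
import math


def chain_strength(links):
    """Output of a perfectly complementary system: the weakest link."""
    return min(links)


# A chain of 100 equally strong links (assumed strength of 1.0 each).
links = [1.0] * 100
before = chain_strength(links)

# Make the first 99 links infinitely strong; link 100 is untouched.
links[:99] = [math.inf] * 99
after = chain_strength(links)

print(before, after)
```

Under this assumption the chain is exactly as strong after the upgrade as before it, because the one link that wasn't improved still sets the limit.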

Jones formalises this with a beautifully simple formula. If a task accounts for share s of GDP and you automate it to infinite productivity, total output rises by a factor of:

1 / (1 - s)

So the gain is that factor minus one, or s / (1 - s).

Let's run the numbers:

| Task category | Share of GDP | Gain from infinite automation |
|---|---|---|
| Software | 2% | 2% |
| All IT | 5% | 5.3% |
| All knowledge work | 33% | 50% |

Infinitely productive software gives you a 2% gain.
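The table's arithmetic can be checked in a few lines of Python. This sketch reads 1 / (1 - s) as the factor by which output rises, so the gain is that factor minus one. The function name `infinite_automation_gain` is hypothetical, and the shares are the ones quoted in the table.

```python
def infinite_automation_gain(share):
    """Fractional rise in output when a task with GDP share `share`
    becomes infinitely productive: 1 / (1 - share) - 1."""
    if not 0 <= share < 1:
        raise ValueError("share must be in [0, 1)")
    return 1 / (1 - share) - 1


# Shares of GDP as quoted in the table above.
shares = {"Software": 0.02, "All IT": 0.05, "All knowledge work": 0.33}
gains = {name: infinite_automation_gain(s) for name, s in shares.items()}

for name, gain in gains.items():
    print(f"{name}: {gain:.1%}")
```

A share of exactly one third gives a gain of exactly 50%. With the rounded 33% used here the result comes out just under that, at roughly 49%.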

This isn't a bug in AI. It's how complementary systems work. And every organisation is a complementary system.

The Radiologist Who Didn't Disappear

In 2016, Geoffrey Hinton, the "godfather of AI", made a prediction that became infamous.

"People should stop training radiologists now," he said. "It's just completely obvious that within five years, deep learning is going to do better than radiologists."

It's now 2026.

There are more radiologists than ever. Their salaries have risen, not fallen.

What happened?

Jones explains it simply: Jobs are bundles of tasks.

A radiologist doesn't just read scans. They:

- Consult with patients about findings

- Coordinate with surgeons on treatment plans

- Make judgment calls in ambiguous cases

- Navigate institutional politics

- Provide emotional context for difficult diagnoses

- Train junior physicians

AI got extremely good at one task: reading scans.

What happened to the other tasks?

They became more valuable.

When AI automated scan reading, radiologists could spend more time on the tasks that require human judgment, emotional intelligence, and institutional knowledge. The bottleneck shifted. And in a weak-links world, the bottleneck is where the value lives.

AI didn't replace radiologists. It made them more valuable at being human.

The Universal Translator

I've spent the last year developing a framework I call LLV (Lines, Loops, and Vibes), codified in my book The Helix Moment. It's designed to decode the rhythmic signature of any system, any framework, any organisation.

When I read Jones's paper, I realised he'd given me the economic proof for something I've been teaching intuitively.

Lines, Loops, and Vibes aren't just organisational rhythms. They're also a hierarchy of automation difficulty.

Let me explain.

Lines: The Easiest to Automate

Lines represent structure. Process. Rules. Compliance. Checklists.

- "If X, then Y"

- "Follow these 12 steps"

- "Check these 47 boxes"

Lines are essential — they create reliability and scale. But they're also the most automatable. They're explicit. They can be codified. They can be turned into algorithms.

When people talk about AI replacing work, they're usually talking about automating Lines.

Loops: Harder to Automate

Loops represent learning. Iteration. Experimentation. Judgment developed through practice.

- "Try this, see what happens, adjust"

- "We've learned from 100 failed experiments"

- "My gut says this won't work, but I can't articulate why"

Loops are harder to automate because they require feedback across time. They need context that accumulates. They depend on tacit knowledge that's hard to transfer.

AI can run loops faster, as the human+AI team at Lloyds Banking Group discovered (more on that below). But the judgment about which loops to run, when to stop, what the results mean? That's still human.

Vibes: The Hardest to Automate

Vibes represent culture. Trust. Sensing. Emergence. The unspoken.

- "The room felt off, so I delayed the decision"

- "I knew the team wasn't ready for that level of autonomy"

- "Something in the market is shifting, even though the data doesn't show it yet"

Vibes are the least automatable because they can't be articulated. They're pre-linguistic. They exist in the space between people, not in individuals. They emerge from relationships that take years to build.

AI doesn't do Vibes.

It can analyse sentiment. It can detect patterns. But it can't sense when the CEO is lying to herself about why she's resisting a decision. It can't feel the trust deficit between two teams that's killing a merger. It can't intuit that a market is about to shift before any data confirms it.

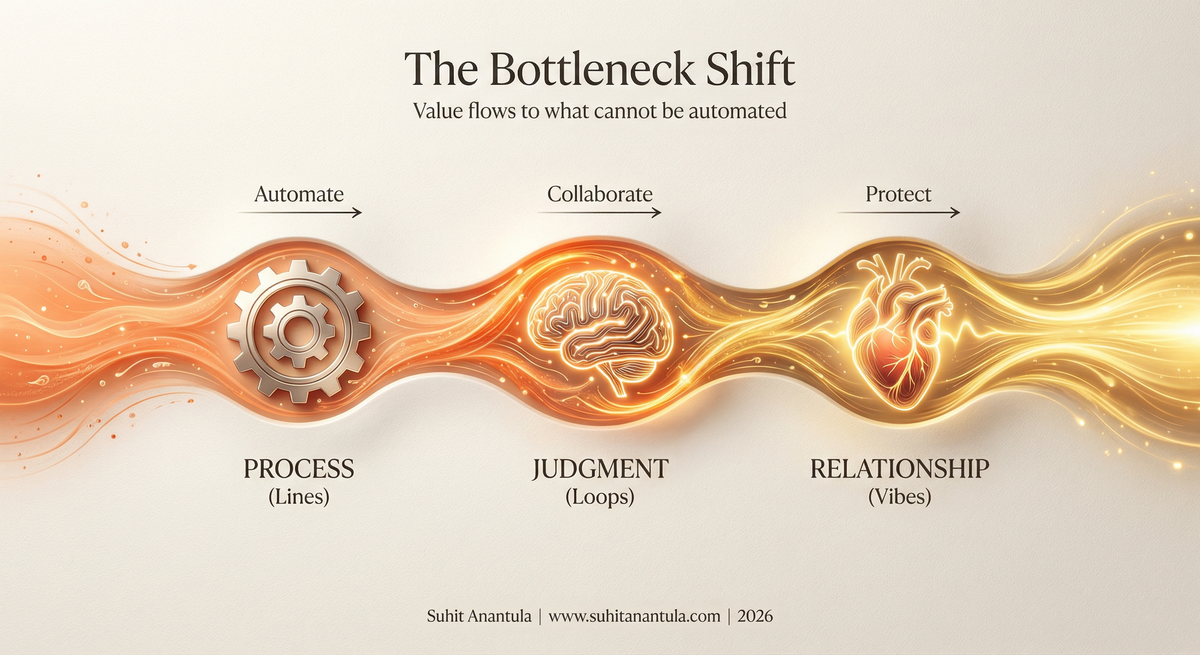

The Weak Links Are Loops and Vibes

Here's where Jones's economics and LLV converge into a single insight:

If you automate all the Lines work, Loops and Vibes become your bottleneck.

And in a weak-links world, the bottleneck is where all the value concentrates.

This is exactly what we saw at Lloyds Banking Group with Project Turing. The human+AI team didn't win because they had better AI. They won because they designed their workflow to let AI handle the Lines (rapid iteration, data synthesis, variation generation) while humans focused on the Loops (judgment about what to try next) and Vibes (sensing what would emotionally resonate with customers).

The blind panel judged their output "the most human."

Not despite the AI. Because of it.

More AI on Lines created more human value on Loops and Vibes.

A Country of Geniuses in a Datacenter

Jones quotes Dario Amodei, CEO of Anthropic, describing a near-future scenario:

"A country of geniuses in a datacenter."

Billions of AI instances. Performing any cognitive task. 24/7. At marginal cost approaching zero.

Sounds transformative, right?

It is. But Jones shows that even in this scenario, the economic impact is bounded by the tasks we can't automate.

Here's his projection. Even with explosive AI capabilities, the weak-links model predicts:

| Timeline | Output gain |
|---|---|
| 20 years | 5% higher |
| 40 years | 20% higher |

Not the explosion you expected.

Why? Because the hard-to-automate tasks — the Loops and Vibes — constrain the whole system. The country of geniuses in the datacenter is infinitely productive at some tasks and completely useless at others.

The bottleneck shifts. It doesn't disappear.

What This Means for Your Organisation

If you're an executive reading this, you might feel two things simultaneously:

- Relief: AI isn't going to automate your organisation out of existence

- Pressure: if the bottleneck is shifting, you need to know where it's shifting to

Here's the strategic playbook:

1. Map Your Current Bottlenecks

Use LLV as a diagnostic:

- Where are you Lines-heavy? These are your automation opportunities. Go fast here.

- Where are you Loops-heavy? These need human+AI collaboration. Design better feedback systems.

- Where are you Vibes-heavy? These need protection. Don't try to automate trust.

2. Invest in What Becomes Scarce

Economics 101: Value flows to scarcity.

If AI makes Lines-work abundant, what becomes scarce?

- Human judgment (Loops)

- Relational trust (Vibes)

- Synthesis across domains (Vibes + Loops)

- Emotional resonance (Vibes)

- Institutional knowledge (Loops)

These are your hiring priorities. Your training investments. Your leadership development focus.

3. Design for Complementarity, Not Replacement

Radiologists didn't disappear, because their jobs were bundles of tasks. AI took one task. The others remained and became more valuable.

Ask yourself: What's the bundle?

For every role you're considering automating, decompose it:

| Task | Type | Automate? | Post-automation value |
|---|---|---|---|
| Data entry | Lines | Yes | Near zero |
| Trend analysis | Lines | Yes | Near zero |
| Judgment calls | Loops | Partial | Higher |
| Client relationships | Vibes | No | Much higher |
| Team culture | Vibes | No | Much higher |

The roles that survive aren't the ones with no automatable tasks. They're the ones where the non-automatable tasks justify the whole position.
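If you want to run this decomposition across many roles, it can be captured as a small data structure. The sketch below is one possible encoding in Python, not a prescribed tool. The `Task` class and the `remaining_bottlenecks` helper are hypothetical names, and the rows are the example tasks from the table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    name: str
    kind: str      # "Lines", "Loops", or "Vibes"
    automate: str  # "Yes", "Partial", or "No"


# The example role from the table above.
role = [
    Task("Data entry", "Lines", "Yes"),
    Task("Trend analysis", "Lines", "Yes"),
    Task("Judgment calls", "Loops", "Partial"),
    Task("Client relationships", "Vibes", "No"),
    Task("Team culture", "Vibes", "No"),
]


def remaining_bottlenecks(tasks):
    """Tasks that still need people once the fully automatable ones go to AI."""
    return [t.name for t in tasks if t.automate != "Yes"]


bottlenecks = remaining_bottlenecks(role)
print(bottlenecks)
```

For this example role, the list that comes back is the Loops and Vibes work: judgment calls, client relationships, and team culture. Those are the tasks that have to justify the position.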

4. Build Your Co-Intelligence Capability

This is the real unlock.

A co-intelligent organisation doesn't ask "What can AI replace?"

It asks "How do we create value that neither intelligence could create alone?"

Jones's weak-links model makes the point mathematically: automating tasks in isolation yields bounded gains, because the tasks left over constrain the whole system. Designing humans and AI to create value together on those remaining tasks can push the frontier in ways pure automation can't.

The organisations that win will be the ones that design for co-intelligence at every level.

The Question That Matters

Jones asks a provocative question in his paper:

"When AI is better at developing growth models than I am, where will I find meaning?"

It's a real question. If you're a knowledge worker — and you're reading this, so you probably are — AI will eventually outperform you on many tasks you currently consider "yours."

But here's the thing: Jones is a growth economist. He's spent his career building models. And his own model shows that the bottleneck will shift to something else.

Maybe it's teaching the next generation what the models mean.

Maybe it's sensing which questions are worth modelling.

Maybe it's building the institutional trust required to implement the model's recommendations.

The work doesn't disappear. It transforms.

And the organisations that help their people navigate that transformation — that invest in the Loops and Vibes while automating the Lines — will attract and retain the talent that makes the difference.

The Hype Meets the Math

Every AI conversation I have with executives follows the same arc:

First: "AI is going to change everything."

Then: "But we don't know exactly how."

Finally: "So we're doing pilots and waiting."

Jones's paper gives us something better than waiting: a model.

Not a prediction. A structure for thinking.

The weak-links framework says:

- Automation yields bounded gains

- The bottleneck shifts; it doesn't disappear

- Value concentrates in the hardest-to-automate tasks

- Complementarity beats replacement

The LLV framework translates that into action:

- Lines are your automation opportunities

- Loops are your collaboration design challenges

- Vibes are your human investment priorities

Together, they give you a way to cut through the hype without dismissing AI's potential.

AI will transform everything. And it will transform nothing.

Both are true. The transformation is real. The replacement is bounded.

The question isn't whether AI will change your organisation. It's whether you'll redesign your organisation to capture the value that AI creates and protect the value that only humans can provide.

Three Questions for Monday Morning

1. Where are your Lines?

List the five most procedural, rule-based, codifiable processes in your organisation. These are your immediate automation candidates. If you're not moving fast here, your competitors are.

2. Where are your Vibes?

Identify the three relationships, trust dynamics, or cultural sensing capabilities that make your organisation work. These are your protection priorities. Don't let AI hype convince you to optimise them away.

3. What's your bottleneck today — and what will it be in three years?

If AI automates your current bottleneck, where does the constraint shift? That's where your strategic investment should go.

The weak-links framework isn't pessimistic. It's clarifying.

It shows us exactly where to look.

If you want to map your organisation's LLV signature and identify where the bottleneck will shift, reply to this email or leave a comment. I'm running diagnostic sessions for executive teams navigating the AI transition.